Synthetic Data in Market Research: The Promise Is Real. So Are the Risks. Here Is Where We Stand.

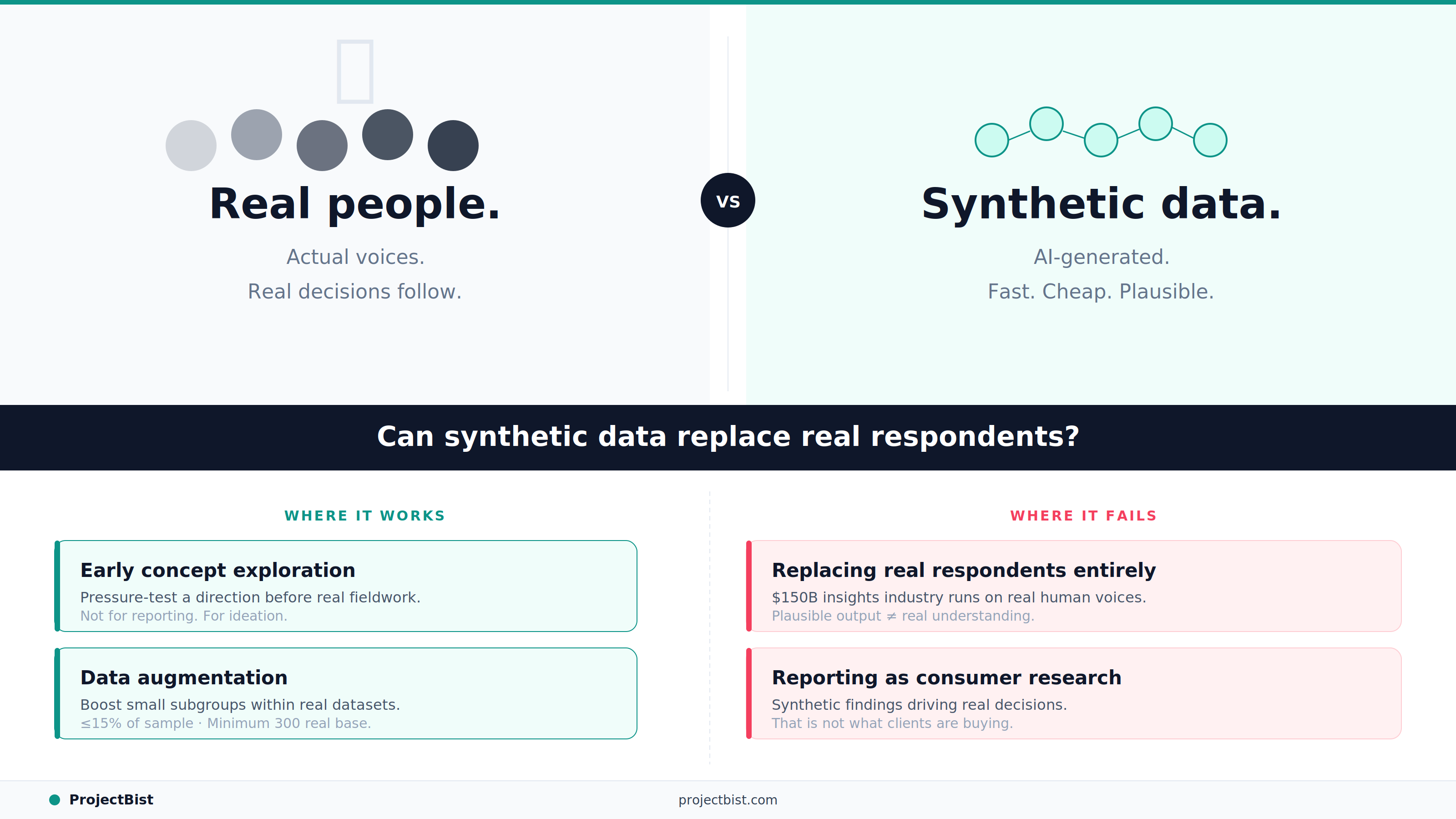

The most heated debate in market research right now is not about AI tools. It is about whether AI-generated survey responses can replace real people. Here is our honest read of where the evidence points.

Kwame Mensah

May 16, 2026 • 5 min read

In October 2023, marketing strategist Mark Ritson wrote in Marketing Week that AI's ability to simulate consumer responses had 'gigantic implications' for market research. The research industry responded with approximately the grace he predicted it would.

That exchange launched what has become the most contested debate in market research: can artificially generated data replace real survey respondents? And if so, when, and for what?

More than two years on, the industry is not much closer to a clean consensus. But the evidence has become clearer. Here is where things actually stand, without the evangelism or the defensiveness.

First: What People Mean When They Say Synthetic Data

The confusion in this debate starts with the term itself. In June 2025, the ICC/ESOMAR Code introduced the first official definition: data artificially generated to replace what would normally be collected directly from people. But even within that definition, there are at least five distinct things researchers are actually describing:

- Synthetic personas: AI-generated representatives of a target segment, used to explore how a prototypical customer might think about a concept. Useful for ideation, not for reporting findings.

- Data augmentation or boosting: adding synthetic respondents to an existing real dataset to increase sample size for underrepresented subgroups. The most technically defensible application.

- Simulated individual-level data: a completed survey dataset generated entirely by AI, with no real respondents. The most controversial and most scrutinized application.

- Digital twins: AI replicas of specific, known individuals built from their behavioral and transactional data. Powerful for scenario-testing but raises serious privacy concerns.

- Simulated conversations: AI-conducted interviews or focus group discussions with synthetic participants. Emerging and contested.

These are not the same thing. Calling all of them 'synthetic data' and evaluating them as one category is how the debate gets muddled.

What the Evidence Actually Shows

The most rigorous academic research on AI-simulated survey data has produced results that should give serious practitioners pause. A study comparing AI-generated responses to actual human survey data, referenced in Quirks Magazine, found that 48 percent of statistical coefficients estimated from AI responses were significantly different from their human counterparts. Among those cases, the direction of the relationship was reversed 32 percent of the time. This is not a small margin of error. It is the kind of systematic error that can lead to opposite conclusions.
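To make the arithmetic concrete: if 48 percent of coefficients differ significantly and 32 percent of those flip direction, roughly 15 percent of all estimated coefficients point the wrong way. The sketch below is a minimal illustration, not the study's method. It computes that share and shows one simple way to flag sign flips between a model fitted on human data and one fitted on synthetic data. The function names, coefficient names, and coefficient values are all hypothetical.

```python
def share_reversed(share_different: float, share_flipped_given_different: float) -> float:
    """Share of all coefficients that both differ significantly and flip sign."""
    return share_different * share_flipped_given_different


def flag_sign_flips(human: dict[str, float], synthetic: dict[str, float]) -> list[str]:
    """Return names of coefficients whose sign differs between the two models."""
    flipped = []
    for name, h in human.items():
        s = synthetic.get(name)
        # A negative product means one coefficient is positive and the other negative.
        if s is not None and h * s < 0:
            flipped.append(name)
    return flipped


# Figures quoted in the article: 48% differ, 32% of those reverse direction.
overall = share_reversed(0.48, 0.32)
print(f"{overall:.1%} of all coefficients reversed")  # prints "15.4% of all coefficients reversed"

# Hypothetical coefficients, made up for illustration only.
human_coefs = {"price": -0.40, "brand_trust": 0.25, "age": 0.05}
synthetic_coefs = {"price": -0.35, "brand_trust": -0.10, "age": 0.07}
print(flag_sign_flips(human_coefs, synthetic_coefs))  # prints "['brand_trust']"
```

This check only compares signs. A real comparison would also test whether each difference is statistically significant before treating a flip as meaningful.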

The problem is particularly acute for non-Western populations. AI language models are trained predominantly on English-language data from North American and European sources. When researchers use these models to simulate consumer perspectives from Latin America, Sub-Saharan Africa, or Southeast Asia, they are not getting authentic regional insight. They are getting a Western approximation wearing an international demographic label.

The most dangerous thing about synthetic data that looks plausible is that you cannot easily tell when it is wrong. A survey with bot responses at least leaves detectable patterns. An AI that confidently reverses a real-world relationship leaves you with confident, well-formatted nonsense.

Where Synthetic Data Does Have Genuine Value

The case for synthetic data is strongest in three specific contexts:

Early-stage concept exploration

A Harvard Business Review study found that AI can be useful for early-phase idea testing, where the goal is not to produce reportable findings but to quickly pressure-test a direction before investing in real fieldwork. Using synthetic personas to explore whether a concept resonates enough to be worth investigating further is a reasonable use. Reporting those explorations as consumer research is not.

Data augmentation for rare populations

Where you have collected real data on 300 respondents from a target group that is genuinely hard to reach and need to make estimates for a specific subgroup of 40, carefully applied augmentation techniques can improve statistical reliability. The critical requirement, as noted by researchers at ESOMAR's 2025 Congress, is that augmentation models should only be applied to segments comprising 15 percent or less of the total sample, and only with at least 300 real respondents as the base.
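The two thresholds above can be expressed as a simple pre-check. This is a minimal sketch, assuming the rule is applied exactly as stated (segment share of 15 percent or less, real base of at least 300). The function and constant names are hypothetical and do not come from any ESOMAR tooling.

```python
MIN_REAL_BASE = 300        # minimum number of real respondents in the base sample
MAX_SEGMENT_SHARE = 0.15   # segment must be 15% or less of the total sample


def augmentation_allowed(total_real: int, segment_real: int) -> bool:
    """Check both thresholds described in the article for one segment."""
    if total_real <= 0 or segment_real < 0 or segment_real > total_real:
        raise ValueError("need total_real > 0 and 0 <= segment_real <= total_real")
    has_base = total_real >= MIN_REAL_BASE
    small_enough = segment_real / total_real <= MAX_SEGMENT_SHARE
    return has_base and small_enough


print(augmentation_allowed(300, 40))  # True: 40/300 is about 13.3%, base is 300
print(augmentation_allowed(300, 60))  # False: 60/300 is 20%, above the 15% cap
print(augmentation_allowed(200, 20))  # False: base is below 300
```

Passing this check says nothing about whether the augmentation model itself is sound. It only confirms that the sample meets the stated minimum conditions.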

Survey pretesting and instrument validation

Running synthetic responses through a survey before fielding it to real respondents can help identify confusing questions, skip logic errors, and implausible response patterns. This is analogous to running software against test inputs before deploying it.
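As a rough illustration of the skip-logic part of pretesting, the sketch below walks simulated respondents through a small questionnaire and reports questions that no respondent ever reaches. Everything here is an assumption made for the example: the survey structure, the question ids, and the use of random answers as a stand-in for AI-generated responses.

```python
import random

# Hypothetical questionnaire. Each question has answer options, optional skip
# rules (answer -> next question id), and a default next question.
SURVEY = {
    "q1": {"options": ["yes", "no"], "skip": {"no": "q4"}, "next": "q2"},
    "q2": {"options": ["daily", "weekly"], "skip": {}, "next": "q4"},
    "q3": {"options": ["a", "b"], "skip": {}, "next": "q4"},  # nothing routes here
    "q4": {"options": ["1", "2", "3"], "skip": {}, "next": None},
}


def run_respondent(survey, start, rng):
    """Walk one simulated respondent through the survey; return visited ids."""
    visited, current = [], start
    while current is not None:
        if current not in survey:
            raise KeyError(f"routing points to unknown question {current!r}")
        if current in visited:
            raise RuntimeError(f"routing loop detected at {current!r}")
        visited.append(current)
        question = survey[current]
        answer = rng.choice(question["options"])
        # Follow a skip rule if one matches the answer, else the default route.
        current = question["skip"].get(answer, question["next"])
    return visited


def unreachable_questions(survey, start, n_runs=500, seed=0):
    """Return question ids that no simulated respondent reached."""
    rng = random.Random(seed)
    seen = set()
    for _ in range(n_runs):
        seen.update(run_respondent(survey, start, rng))
    return sorted(set(survey) - seen)


print(unreachable_questions(SURVEY, "q1"))  # prints "['q3']"
```

Random answers are enough to expose routing faults such as orphaned questions, dangling skip targets, and loops. Spotting confusing wording or implausible response patterns would need richer simulated respondents and human review.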

Our Position

We think the most honest framing is this: synthetic data is a real innovation with real, bounded applications. It is not a replacement for the voice of actual human beings on questions that matter enough to invest research budget in. The $150 billion insights economy runs on research clients who need to make real decisions based on real understanding of real people. That is a different thing from fast, cheap, plausible output that sounds like what a target audience might say.

The researchers and firms on ProjectBist work on real fieldwork, real analysis, and real findings. The demand for that will not shrink because AI can simulate a focus group. It will shift. The work that requires genuine human judgment, contextual intelligence, and cultural fluency will become more visibly valuable precisely because the synthetic alternative exists.

FAQ

Is synthetic data approved by ESOMAR for market research?

In June 2025, the ICC/ESOMAR Code introduced an official definition of synthetic data and published guidance on responsible use. It does not prohibit synthetic data but requires transparency: buyers must be informed when synthetic data is used, and suppliers must disclose the methods and minimum viable data thresholds applied. It is not a blanket endorsement.

Can AI-generated synthetic data replace surveys?

The current academic evidence does not support this for quantitative research reporting. In the study referenced in Quirks, 48 percent of coefficients estimated from AI responses differed significantly from their human counterparts, and the direction of the relationship was reversed in 32 percent of those cases. Synthetic approaches are more defensible for early-stage ideation and concept exploration.

What is a digital twin in research?

A digital twin is an AI-generated replica of a specific, real individual, built from their behavioral data, purchase history, and survey responses. It is distinct from a synthetic persona, which represents a generalized demographic segment. Digital twins raise significant privacy and data security concerns, including the risk of inadvertent exposure of sensitive personal information.

Sources

- Quirks: Synthetic Respondents and the Future of Survey Research (2025)

- Greenbook: The Death of the Survey? (2024)

- ESOMAR Congress 2025 Synthetic Data Recap

- Qualtrics: Synthetic Data Market Research FAQ (2026)

- IdSurvey: Synthetic Data and Personas in Market Research

- Harvard Business Review: Using Gen AI for Early-Stage Market Research (July 2025)

Newsletter

Get ProjectBist research notes in your inbox

Personalize your updates! Subscribe to ProjectBist's Newsletter and choose from the following categories.

Related Articles

View all

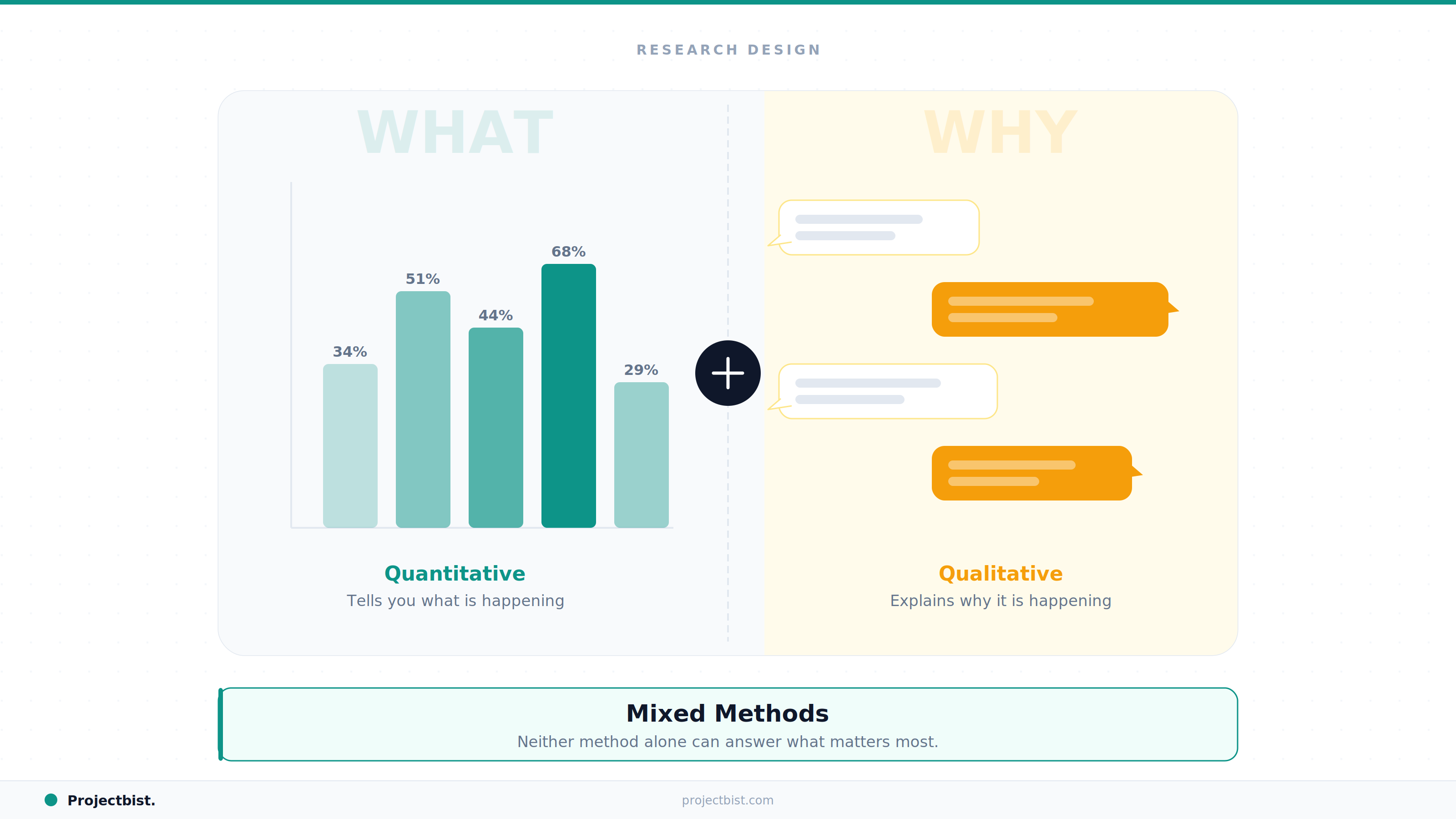

Why Mixed Methods Research Is Becoming the Standard in Development and Social Research

Six Things Clients Get Wrong When Commissioning Qualitative Research

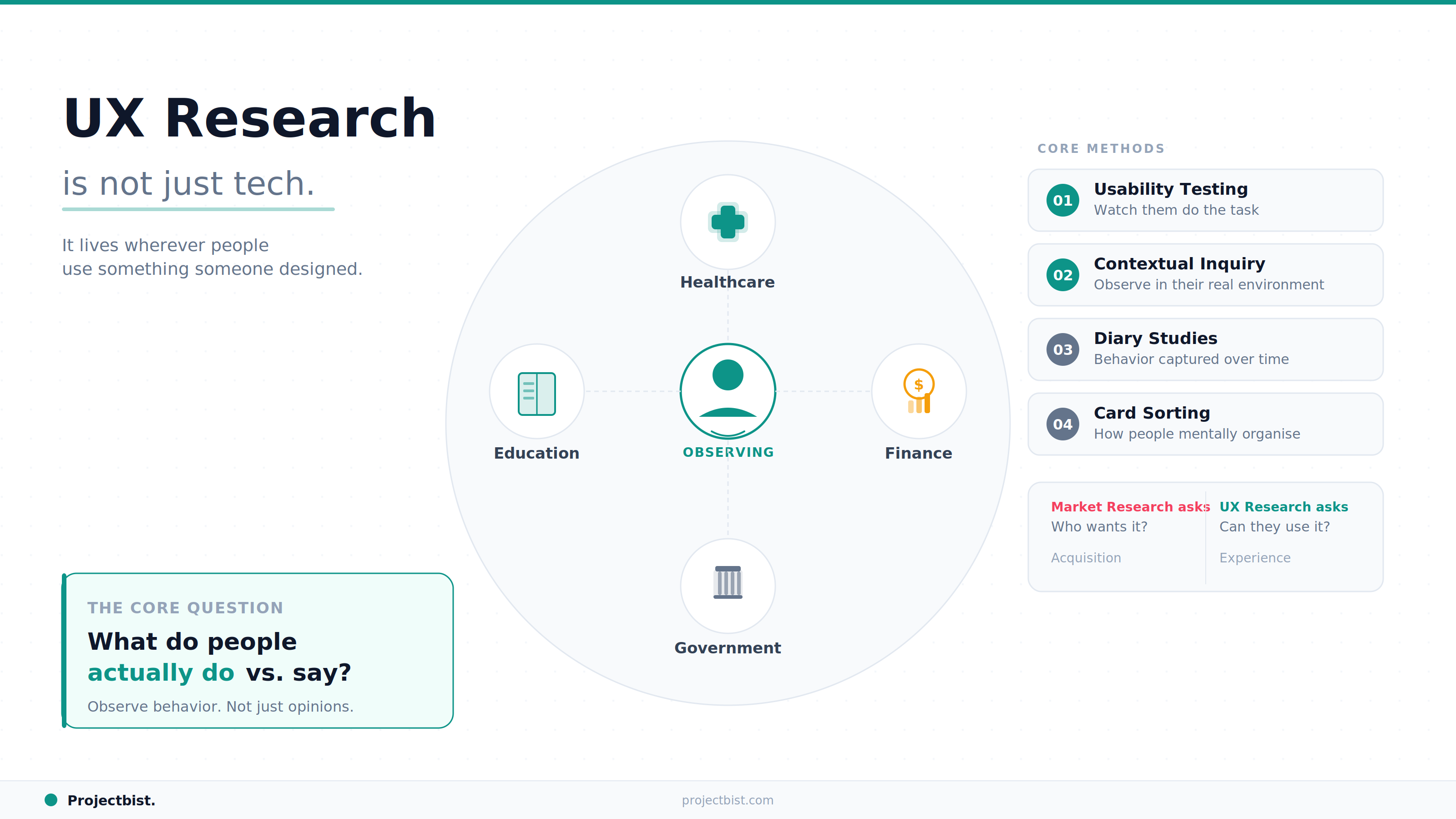

UX Research Is Not Just Tech. Here Is Why It Is One of the Fastest-Growing Research Disciplines Globally