
Fake Data Is a Real Problem in Research. Here Is How the Industry Is Fighting It

Research fraud is not rare. It is systematic, and it is getting more sophisticated. The good news is the tools to catch it are getting better too.

Samir Haddad

Apr 04, 2026•3 min read

If you have ever run an online survey and had the nagging feeling that some of the responses did not quite feel human, you were probably right.

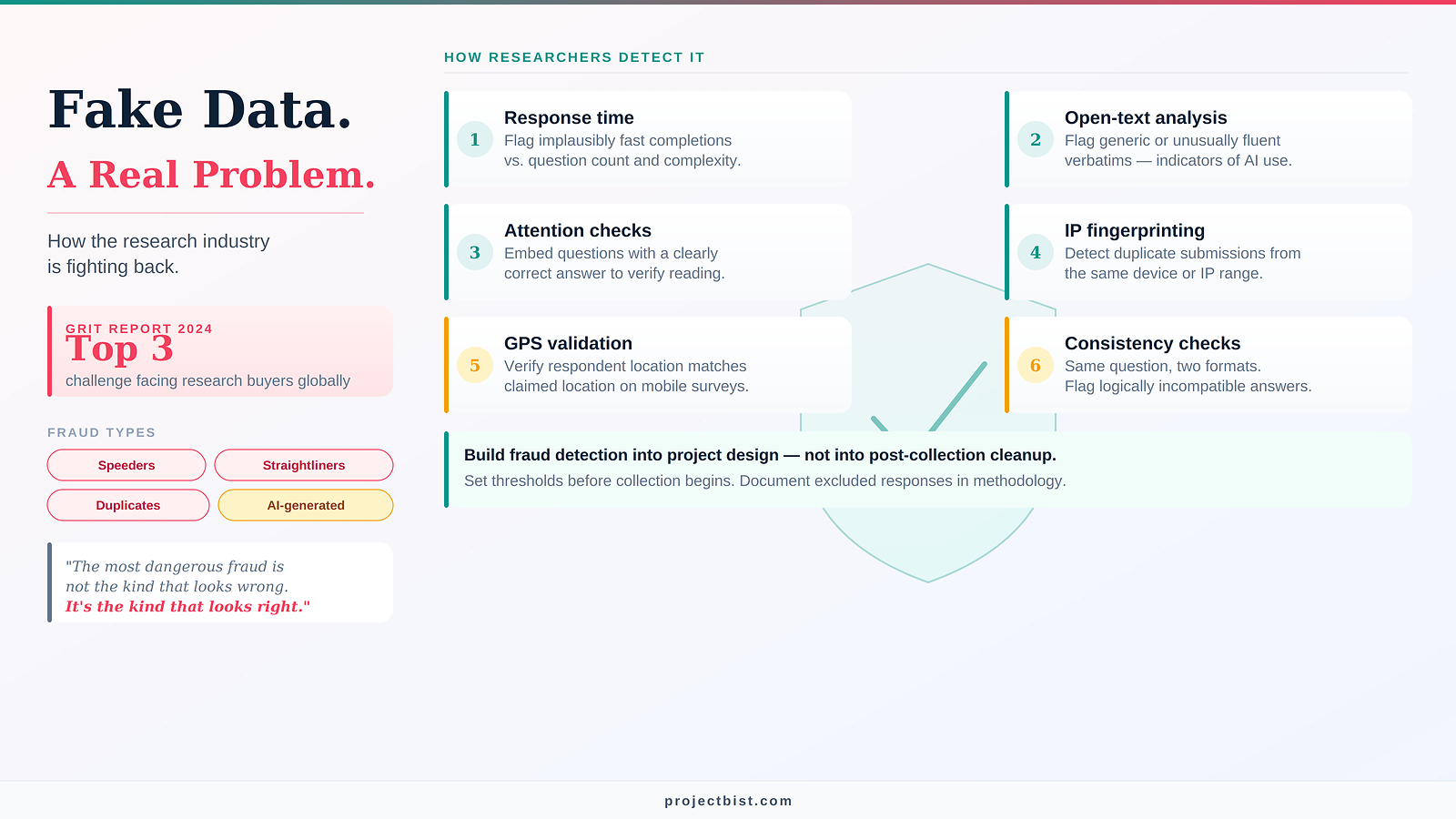

Research fraud is one of the least discussed quality problems in the industry, partly because it is embarrassing to acknowledge and partly because it is difficult to quantify. But the Greenbook GRIT 2024 Report found that data quality concerns are now among the top three challenges facing research buyers globally. And with AI making it trivially easy to generate plausible-looking survey responses at scale, the problem is not getting smaller.

What Research Fraud Actually Looks Like

Not all fraudulent data comes from sophisticated operations. Most of it falls into four categories:

- Speeders: respondents who complete a 20-minute survey in four minutes, clicking through without reading questions. The data looks complete but is essentially random.

- Straightliners: respondents who select the same response option for every matrix question. Quick to complete, statistically destructive.

- Duplicate respondents: the same person completing the survey multiple times under different identities to collect multiple incentive payments.

- AI-generated responses: increasingly common since 2023, these are survey responses produced by large language models. They are linguistically plausible but not grounded in genuine human experience or behavior.
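As a rough illustration of the straightliner category above, the check can be sketched in a few lines of Python. The function name, the `min_items` guard, and the sample data are assumptions made for this example, not an industry standard.

```python
def is_straightliner(matrix_answers, min_items=5):
    """Return True when every answer in a matrix grid is identical.

    min_items is an assumed guard: very short grids can legitimately
    receive identical answers, so they are not flagged.
    """
    if len(matrix_answers) < min_items:
        return False
    return len(set(matrix_answers)) == 1


# Hypothetical respondents and their answers to one matrix question.
responses = {
    "r1": [3, 3, 3, 3, 3, 3],  # same option on every row
    "r2": [4, 2, 5, 3, 4, 1],  # varied answers
    "r3": [5, 5],              # too short to judge
}
flagged = [rid for rid, answers in responses.items() if is_straightliner(answers)]
print(flagged)  # ['r1']
```

A flag like this is a prompt for review rather than proof of fraud, since a respondent may genuinely hold the same view on every item.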

The most dangerous research fraud is not the kind that looks obviously wrong. It is the kind that looks exactly right.

How Researchers Are Detecting It

The research industry has developed a layered approach to fraud detection, and the most rigorous studies apply multiple checks simultaneously:

- Response time monitoring: flagging completions that are faster than a minimum plausible time, set according to question count and complexity.

- Open-text analysis: reviewing verbatim responses for generic, unusually fluent, or off-topic text that may indicate AI generation.

- Attention checks: embedding questions that have a clearly correct answer (or explicitly instruct respondents to select a specific option) to verify that respondents are reading.

- IP and device fingerprinting: detecting duplicate submissions from the same device or IP address range.

- GPS and metadata validation: for mobile surveys, verifying that the respondent's physical location matches the claimed location.

- Consistency checks: asking the same substantive question in two different formats and flagging respondents whose answers are logically incompatible.
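Two of the checks above, response time monitoring and duplicate detection, lend themselves to a short sketch. This is a minimal Python example under stated assumptions: the two-seconds-per-question floor, the function names, and the fingerprint strings are all hypothetical, and a real study would calibrate the floor to its own questionnaire.

```python
from collections import defaultdict


def min_plausible_seconds(question_count, seconds_per_question=2.0):
    """Assumed floor: a fixed minimum reading time per question."""
    return question_count * seconds_per_question


def flag_speeders(completions, question_count, seconds_per_question=2.0):
    """Return IDs whose completion time is below the assumed floor."""
    floor = min_plausible_seconds(question_count, seconds_per_question)
    return {rid for rid, seconds in completions.items() if seconds < floor}


def flag_duplicates(fingerprints):
    """Return IDs that share a device fingerprint with another submission."""
    by_device = defaultdict(list)
    for rid, device in fingerprints.items():
        by_device[device].append(rid)
    return {rid for rids in by_device.values() if len(rids) > 1 for rid in rids}


# Hypothetical data: completion time in seconds and a device fingerprint.
completions = {"r1": 45.0, "r2": 610.0, "r3": 580.0}
fingerprints = {"r1": "dev-a", "r2": "dev-b", "r3": "dev-b"}

speeders = flag_speeders(completions, question_count=40)  # floor is 80 seconds
duplicates = flag_duplicates(fingerprints)
print(sorted(speeders))    # ['r1']
print(sorted(duplicates))  # ['r2', 'r3']
```

Note that the duplicate check flags every submission from a shared device, including the first one. Whether to keep one of them is a judgment call for the researcher.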

What Research Firms Should Be Doing

Fraud detection should be built into the project design, not added as an afterthought during analysis. That means:

- Setting minimum response time thresholds before data collection begins and excluding completions that fall below them.

- Using panel providers that have implemented device fingerprinting and duplicate detection on their end.

- Building open-text questions into surveys where AI-generated responses are a concern, and reviewing them before finalizing the data.

- Reporting excluded responses in the methodology section of the final report, with the exclusion criteria documented.
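The reporting step can be made routine by generating the exclusion summary from the same records used to drop respondents. The sketch below is one possible shape for that summary. The field names, criteria labels, and stage labels are assumptions for illustration.

```python
from collections import Counter


def exclusion_summary(total_completes, exclusions):
    """Summarize exclusions for a methodology section.

    exclusions maps respondent ID -> (criterion, stage).
    """
    by_criterion = Counter(criterion for criterion, _ in exclusions.values())
    by_stage = Counter(stage for _, stage in exclusions.values())
    return {
        "total_completes": total_completes,
        "excluded": len(exclusions),
        "final_sample": total_completes - len(exclusions),
        "by_criterion": dict(by_criterion),
        "by_stage": dict(by_stage),
    }


# Hypothetical exclusion log for a study with 500 completes.
exclusions = {
    "r1": ("below minimum response time", "fieldwork"),
    "r7": ("duplicate device", "fieldwork"),
    "r9": ("below minimum response time", "analysis"),
}
summary = exclusion_summary(total_completes=500, exclusions=exclusions)
print(summary["excluded"], summary["final_sample"])  # 3 497
```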

FAQ

How do I know if my completed dataset already contains fraudulent responses?

Run the checks described in this post against your existing data. Start with response times: flag any completions that fall below the minimum plausible time for your survey length. Then review open-text responses for generic or unusually polished language. Cross-check matrix questions for straightlining. If your panel provider can supply IP and device data, check for duplicate submissions. Any respondents flagged on two or more criteria should be excluded and documented in your methodology.
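The "two or more criteria" rule described in this answer can be sketched as a simple count across checks. This is a minimal Python example. The check names and respondent IDs are hypothetical, and each set would come from whatever detection step produced it.

```python
from collections import Counter


def respondents_to_exclude(flags_by_check, min_flags=2):
    """Return sorted IDs flagged by at least min_flags distinct checks.

    flags_by_check maps a check name to the set of IDs it flagged.
    """
    counts = Counter()
    for flagged_ids in flags_by_check.values():
        counts.update(set(flagged_ids))
    return sorted(rid for rid, n in counts.items() if n >= min_flags)


# Hypothetical output of four separate checks on an existing dataset.
flags_by_check = {
    "response_time": {"r1", "r4"},
    "straightlining": {"r1", "r2"},
    "open_text": {"r4"},
    "duplicate_device": {"r3"},
}
excluded = respondents_to_exclude(flags_by_check)
print(excluded)  # ['r1', 'r4']
```

Requiring two independent flags trades some sensitivity for fewer wrongful exclusions. Respondents with a single flag, such as r2 and r3 here, stay in the data but can be reviewed by hand.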

Do these fraud risks apply to face-to-face and phone surveys, or only to online surveys?

Face-to-face and phone surveys carry different fraud risks. The primary concern is interviewer fabrication, where an interviewer fills in responses without completing the actual interview, rather than respondent fraud. Back-checks, in which a sample of respondents is re-contacted to verify that the interview took place, are the standard control. GPS-logged field submissions and audio recordings of a sample of interviews serve the same purpose. The risk is different in form but equally real.
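Selecting respondents for back-checks can be scripted so the sample is random and reproducible. The sketch below draws the sample per interviewer, which is an assumption of this example rather than something the standard control requires. The 10% rate, the seed, and the IDs are hypothetical too.

```python
import math
import random


def backcheck_sample(interviews_by_interviewer, rate=0.1, seed=0):
    """Pick a random share of each interviewer's interviews for re-contact.

    At least one interview is drawn for every interviewer who has any.
    """
    rng = random.Random(seed)  # fixed seed so the draw can be reproduced
    sample = {}
    for interviewer, interview_ids in interviews_by_interviewer.items():
        k = max(1, math.ceil(len(interview_ids) * rate)) if interview_ids else 0
        sample[interviewer] = rng.sample(sorted(interview_ids), k)
    return sample


# Hypothetical fieldwork log: interviewer -> completed interview IDs.
fieldwork = {
    "interviewer_a": [f"a{i}" for i in range(25)],
    "interviewer_b": [f"b{i}" for i in range(4)],
}
to_recontact = backcheck_sample(fieldwork)
print({name: len(ids) for name, ids in to_recontact.items()})
# {'interviewer_a': 3, 'interviewer_b': 1}
```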

Should excluded responses be reported in the final research report?

Yes, always. Any responses excluded for quality reasons should be reported in the methodology section with the exclusion criteria documented clearly. This includes the number excluded, the criteria applied, and the stage at which exclusions were made. This transparency protects the integrity of the findings and allows clients and reviewers to assess the impact of exclusions on the final sample.

The Verification Dimension

For face-to-face and phone research, fraud takes different forms but the underlying problem is the same: respondents or interviewers fabricate data rather than collect it. This is why interviewer monitoring, back-checks (re-contacting a sample of respondents to verify that the interview took place), and GPS-logged field submissions are standard quality control practice in professional fieldwork operations. Platforms like ProjectBist support this through verified researcher profiles that document credentials, past project experience, and client ratings, which reduces the risk of engaging field teams without a traceable quality track record.

Sources: Greenbook GRIT Report 2024; Qualtrics Data Quality Best Practices 2025; ESOMAR Quality in Research Guidelines

Newsletter

Get ProjectBist research notes in your inbox

Personalize your updates! Subscribe to ProjectBist's Newsletter and choose from the following categories.

Related Articles

View all

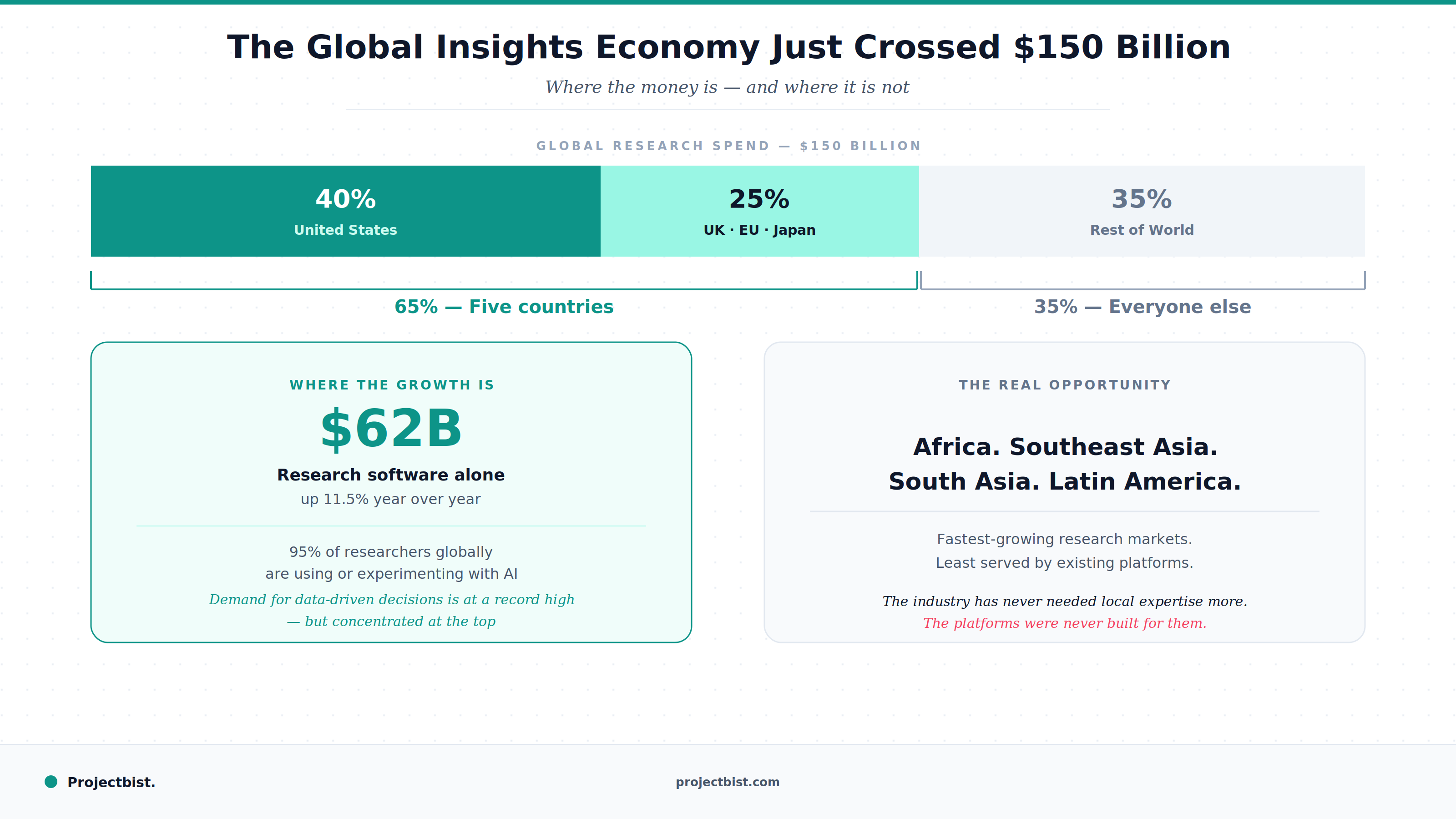

The Global Insights Economy Just Crossed $150 Billion. Here Is What That Actually Means for Research Professionals

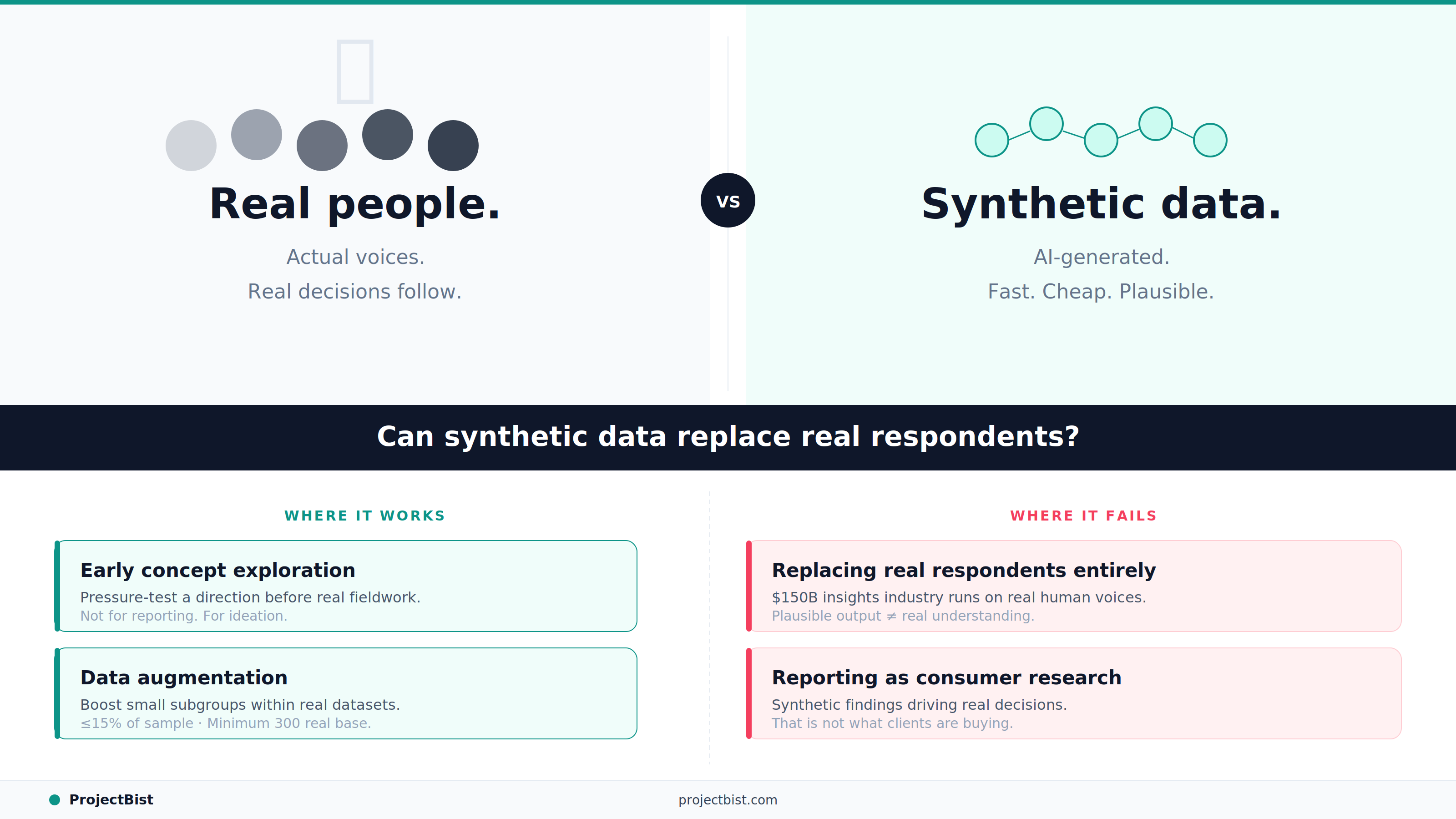

Synthetic Data in Market Research: The Promise Is Real. So Are the Risks. Here Is Where We Stand.

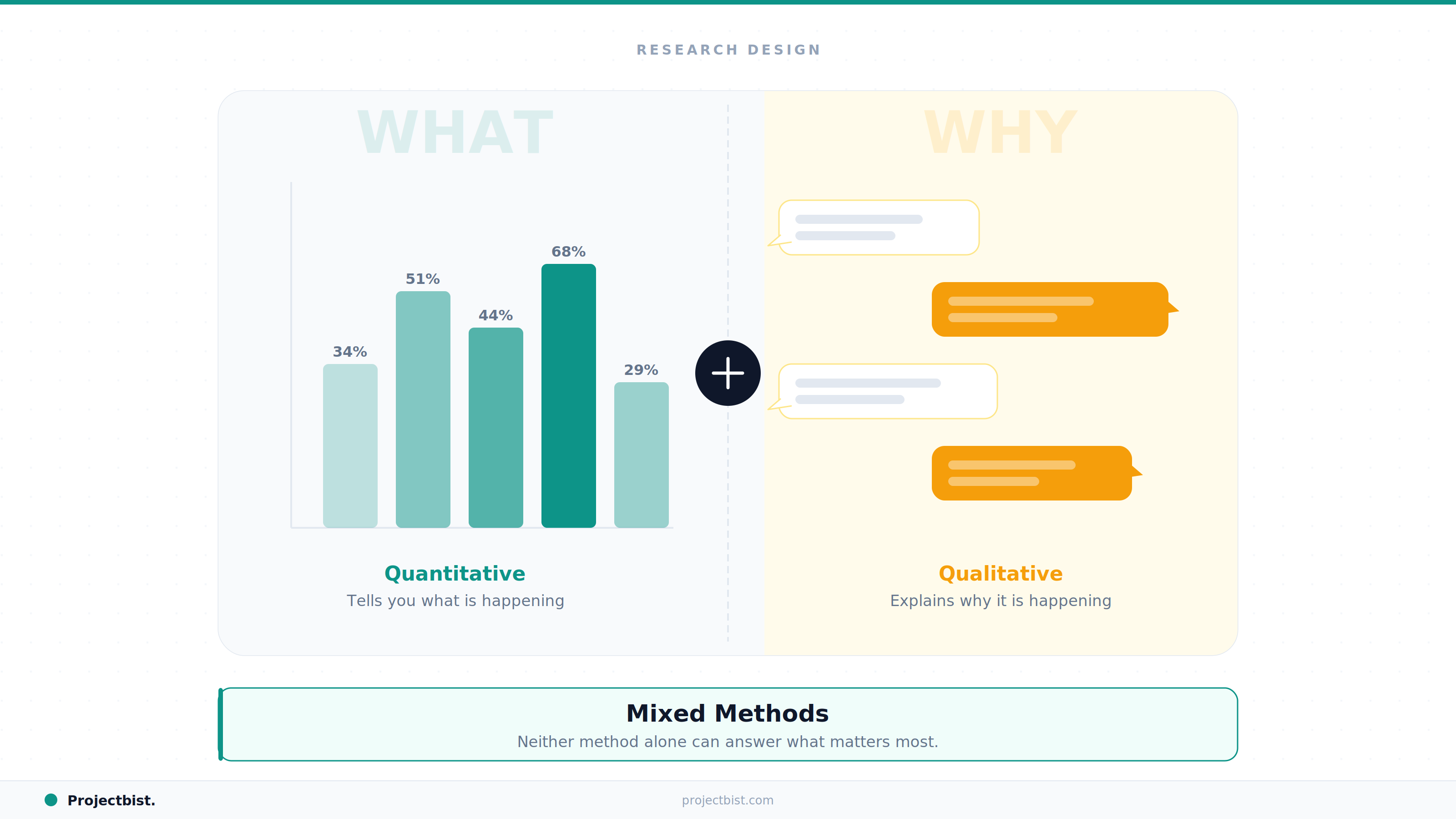

Why Mixed Methods Research Is Becoming the Standard in Development and Social Research