
The Data Collector Problem No One in Research Talks About Enough

Research firms know this problem well. They have just not had a structural way to solve it.

Amina Idris

Apr 14, 2026 • 4 min read

Here is a scenario that anyone who has managed field research will recognize.

You hire a team of data collectors for a household survey. They come recommended by someone your colleague worked with on a previous project. Three weeks in, you start noticing anomalies in the data: response patterns that look suspiciously uniform, GPS timestamps that suggest interviews lasted two minutes, duplicate records with slightly different phone numbers. You raise it with the team. One collector disappears. You discover later that this person had done the same thing on a project for a different firm six months earlier.

Nobody warned you. There was nowhere to check.

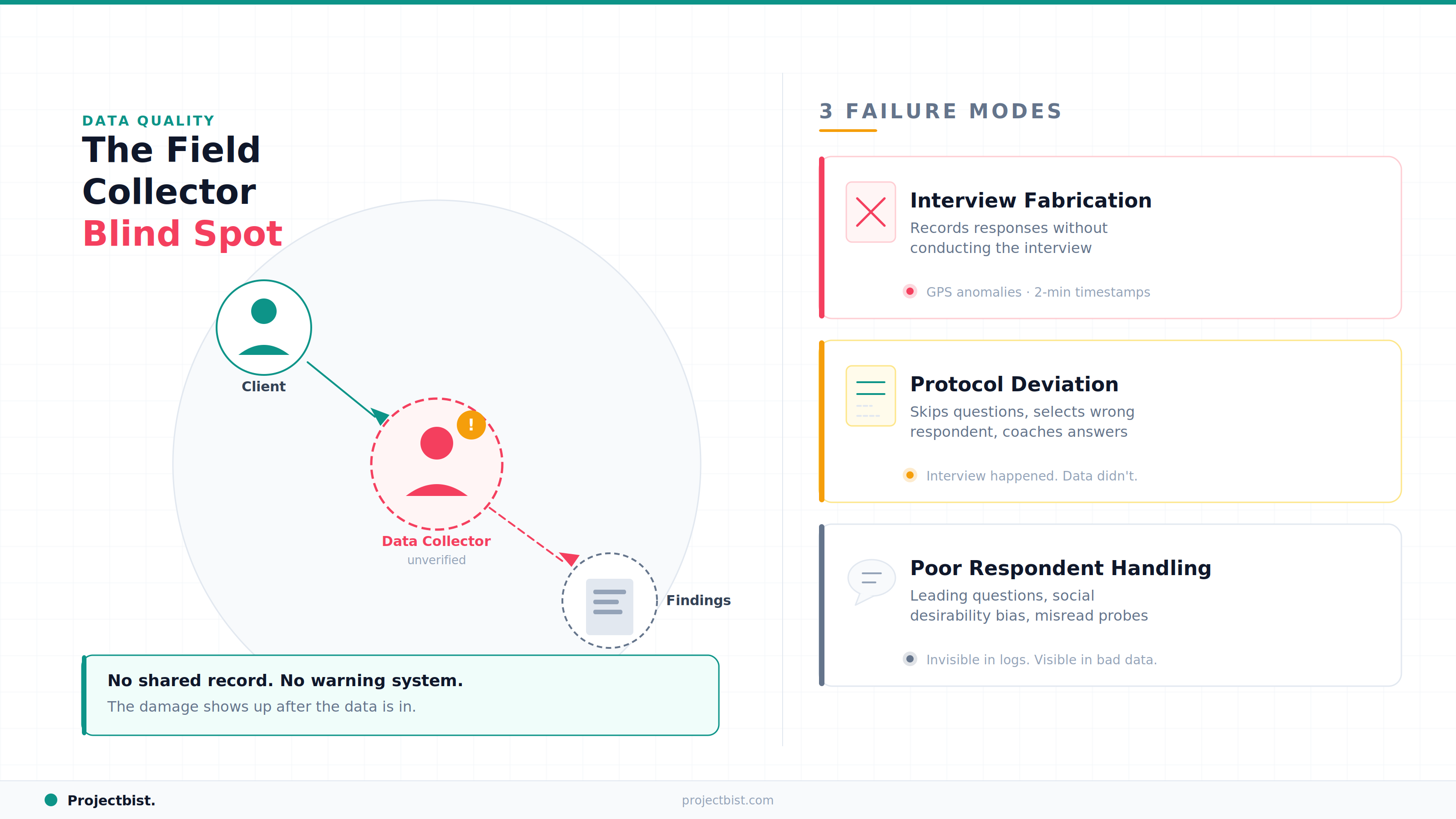

Why Data Collectors Are the Research Industry's Blind Spot

Research firms operate at the project level. When a project ends, the data collector moves on to the next engagement, potentially with a different firm, and their quality record from your project disappears with them. There is no shared infrastructure for research professionals globally to record and access data collector performance history across engagements.

This is a real and documented problem. A Mathematica analysis of survey data collection challenges in Sub-Saharan Africa noted that many local data collection teams are not formally trained in survey methodology, and that the consequences of poorly trained interviewers often go undetected until analysis begins. By then, the damage is done.

A data collector who fabricates interviews does not just waste budget. They undermine every finding the project produces. Bad data does not just weaken a report. It leads to decisions built on false evidence.

The Three Specific Failure Modes

1. Interview Fabrication

The collector records responses without conducting the interview. This is the most severe form of data fraud, and it is harder to detect than people assume. GPS logging catches location inconsistencies. Call duration monitoring catches impossible timings. But in paper-based fieldwork or in environments where digital quality checks are not in place, fabrication can be invisible until the data is analyzed at scale.
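As a rough illustration of the digital checks mentioned above, here is a minimal sketch in Python. The `Interview` record, its field names, the ten-minute minimum duration, and the five-answer cutoff are all assumptions made for this example, not part of any particular survey tool. It flags interviews that finished implausibly fast, interviews where every answer is identical, and phone numbers that differ by a single digit.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Interview:
    # Hypothetical record layout; real survey exports will differ.
    collector_id: str
    started_at: datetime
    ended_at: datetime
    phone: str
    answers: list[int]  # coded responses to closed-ended questions


def flag_interview(
    iv: Interview, min_duration: timedelta = timedelta(minutes=10)
) -> list[str]:
    """Return quality flags for one interview (thresholds are assumptions)."""
    flags = []
    # An interview far shorter than the questionnaire allows is suspicious.
    if iv.ended_at - iv.started_at < min_duration:
        flags.append("too_short")
    # Identical answers to every question suggest straight-lining.
    if len(iv.answers) >= 5 and len(set(iv.answers)) == 1:
        flags.append("uniform_answers")
    return flags


def near_duplicate_phones(a: str, b: str) -> bool:
    """True when two equal-length numbers differ in exactly one digit."""
    if len(a) != len(b):
        return False
    return sum(x != y for x, y in zip(a, b)) == 1


start = datetime(2026, 4, 1, 9, 0)
suspect = Interview(
    "c-01", start, start + timedelta(minutes=2), "08031234567", [3, 3, 3, 3, 3, 3]
)
print(flag_interview(suspect))  # ['too_short', 'uniform_answers']
print(near_duplicate_phones("08031234567", "08031234568"))  # True
```

Flags like these are prompts for a supervisor to follow up, for example with a back-check call, not proof of fabrication on their own.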

2. Non-Adherence to Protocol

The collector conducts interviews but does not follow the data collection protocol correctly: skipping sensitive questions to avoid awkwardness, recording the household head's responses instead of the randomly selected respondent, or coaching respondents toward preferred answers. This type of failure is more common and harder to detect because the interview technically took place.

3. Poor Respondent Handling

The collector leads respondents, misreads questions, fails to probe properly on open-ended responses, or conducts interviews in a way that makes respondents uncomfortable and produces social desirability bias. These failures do not show up in GPS logs or call duration reports. They show up as subtle biases in the data that often only become visible when findings seem unexpectedly consistent or when qualitative validation diverges from the survey results.

What Has Been Missing: A Shared Accountability Structure

The reason this problem persists is not that research firms do not care about data quality. Most do, deeply. The reason is that there has been no shared infrastructure for the research industry to collectively document and access the performance history of data collectors across projects and organizations.

When a data collector joins a project on a platform like ProjectBist, their past engagement history and client ratings are part of their permanent profile. A rating submitted by one firm is visible to the next firm considering hiring them. A data collector who has consistently delivered quality work builds a record that gives new clients confidence. A data collector who has collected poor-quality data or been flagged for protocol violations carries that record forward.
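To make the idea of a record that carries forward concrete, here is a minimal sketch in Python. It is not ProjectBist's actual data model: the `Rating` and `CollectorRecord` classes, the 1 to 5 score scale, and the `flagged` field are hypothetical names chosen for illustration. The point it shows is that ratings from different firms accumulate on one record, so the next firm sees a summary of all of them.

```python
from dataclasses import dataclass, field
from statistics import mean


@dataclass
class Rating:
    firm: str
    project: str
    score: int             # assumed scale: 1 (poor) to 5 (excellent)
    flagged: bool = False  # True if the firm reported a protocol violation


@dataclass
class CollectorRecord:
    collector_id: str
    ratings: list[Rating] = field(default_factory=list)

    def add_rating(self, rating: Rating) -> None:
        # Ratings are only ever appended, so history follows the collector.
        self.ratings.append(rating)

    def summary(self) -> dict:
        """What a firm considering this collector would see."""
        scores = [r.score for r in self.ratings]
        return {
            "projects": len(self.ratings),
            "firms": len({r.firm for r in self.ratings}),
            "average_score": round(mean(scores), 2) if scores else None,
            "flags": sum(r.flagged for r in self.ratings),
        }


record = CollectorRecord("c-01")
record.add_rating(Rating("Firm A", "Household survey", 5))
record.add_rating(Rating("Firm B", "Market study", 2, flagged=True))
print(record.summary())
# {'projects': 2, 'firms': 2, 'average_score': 3.5, 'flags': 1}
```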

This is what social accountability looks like for a professional workforce. It works because it cannot be hidden. A data collector who knows their performance is recorded and visible has a material incentive to work with integrity, even on a project-by-project basis.

What This Means in Practice

For research firms, it means that the next time you need to staff a fieldwork project, you do not have to start from zero with referrals. You can search the database, review profiles, read client ratings from comparable projects, and hire with a level of informed confidence that referrals alone do not provide.

For data collectors who do good work, it is an opportunity. Your track record becomes a professional asset that earns you more projects and better rates.


Browse Data Collectorsarrow_forwardNewsletter

Get ProjectBist research notes in your inbox

Personalize your updates! Subscribe to ProjectBist's Newsletter and choose from the following categories.

Related Articles

View all

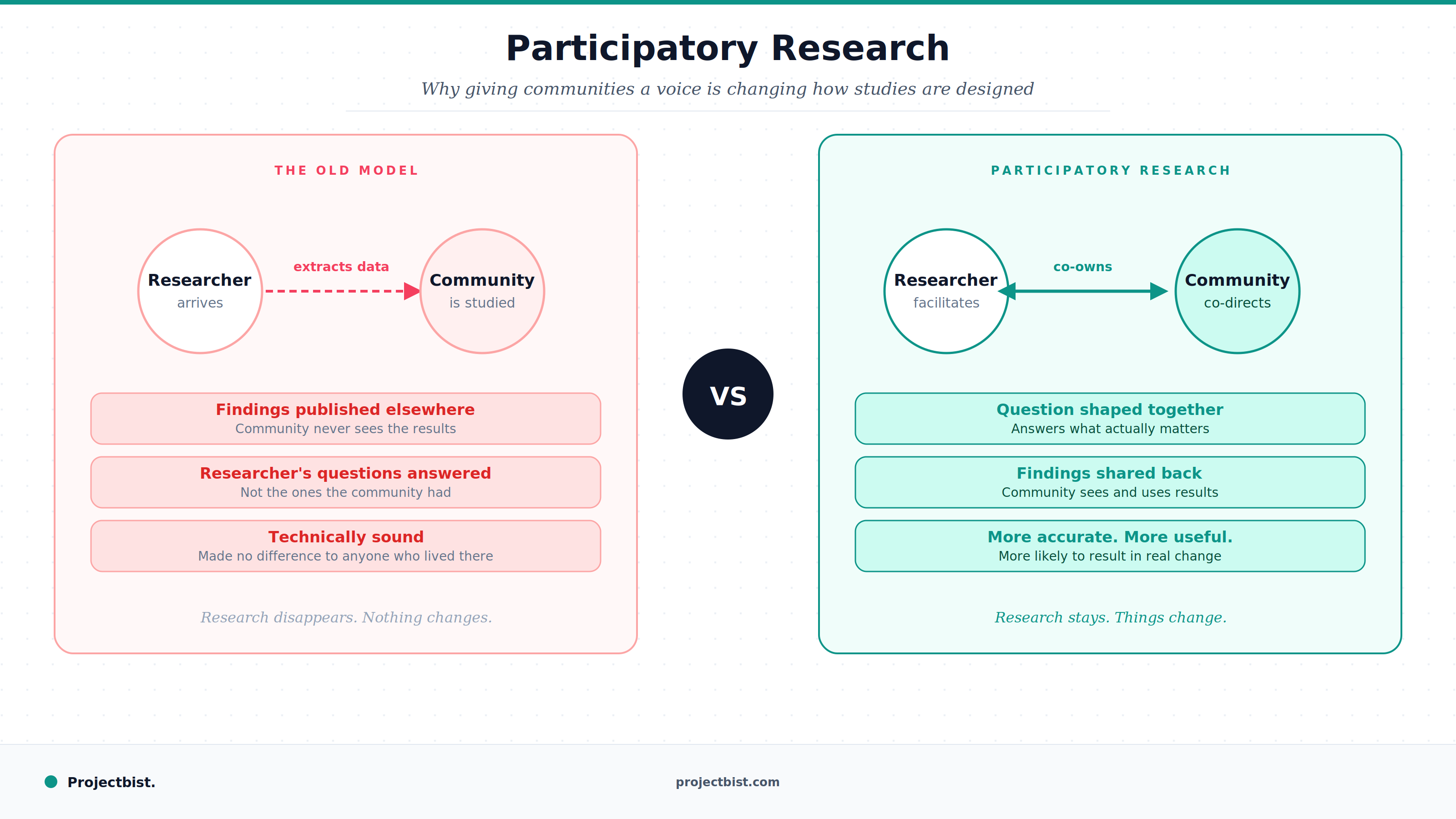

Participatory Research: Why Giving Research Subjects a Voice Is Changing How Studies Are Designed

ESG and Climate Research: Why This Is the Fastest Growing Research Specialization Almost Nobody Is Talking About

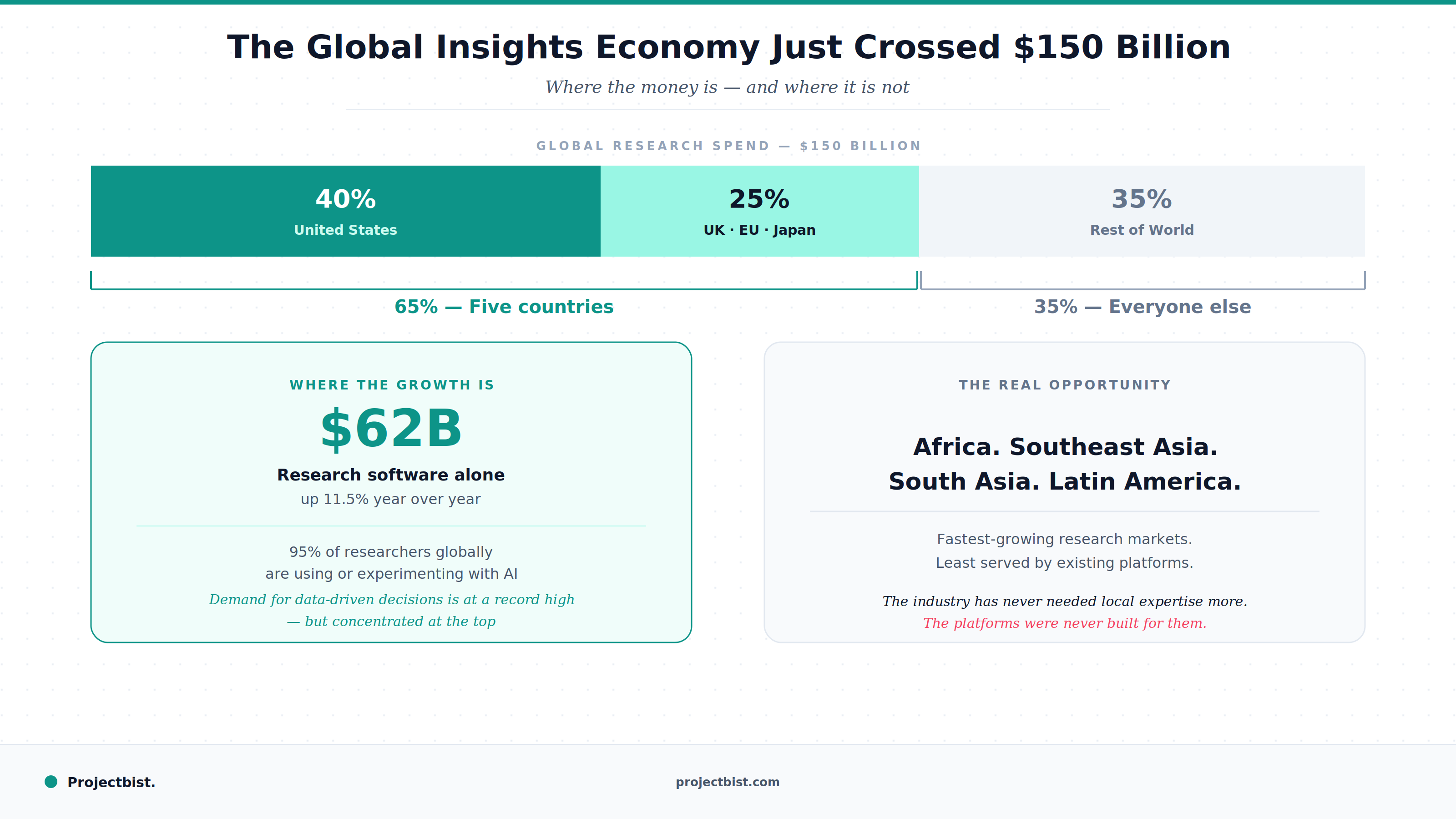

The Global Insights Economy Just Crossed $150 Billion. Here Is What That Actually Means for Research Professionals