
How AI Is Changing the Research Workflow in 2026 (And What It Still Cannot Do)

AI is not replacing research professionals. It is redistributing where they spend their time. That matters enormously for how the field evolves.

Samir Haddad

May 01, 2026•4 min read

There is a version of the AI-in-research story that sounds alarming: machines will code your transcripts, generate your themes, write your reports, and conduct your interviews. Researchers will become reviewers of machine output rather than originators of insight.

There is a more accurate version: AI has made a specific set of research tasks dramatically faster, and it has made a different set of tasks more visibly and valuably human. The researchers who understand which category each task falls into are positioning themselves well. The ones treating this as a threat rather than a shift are not.

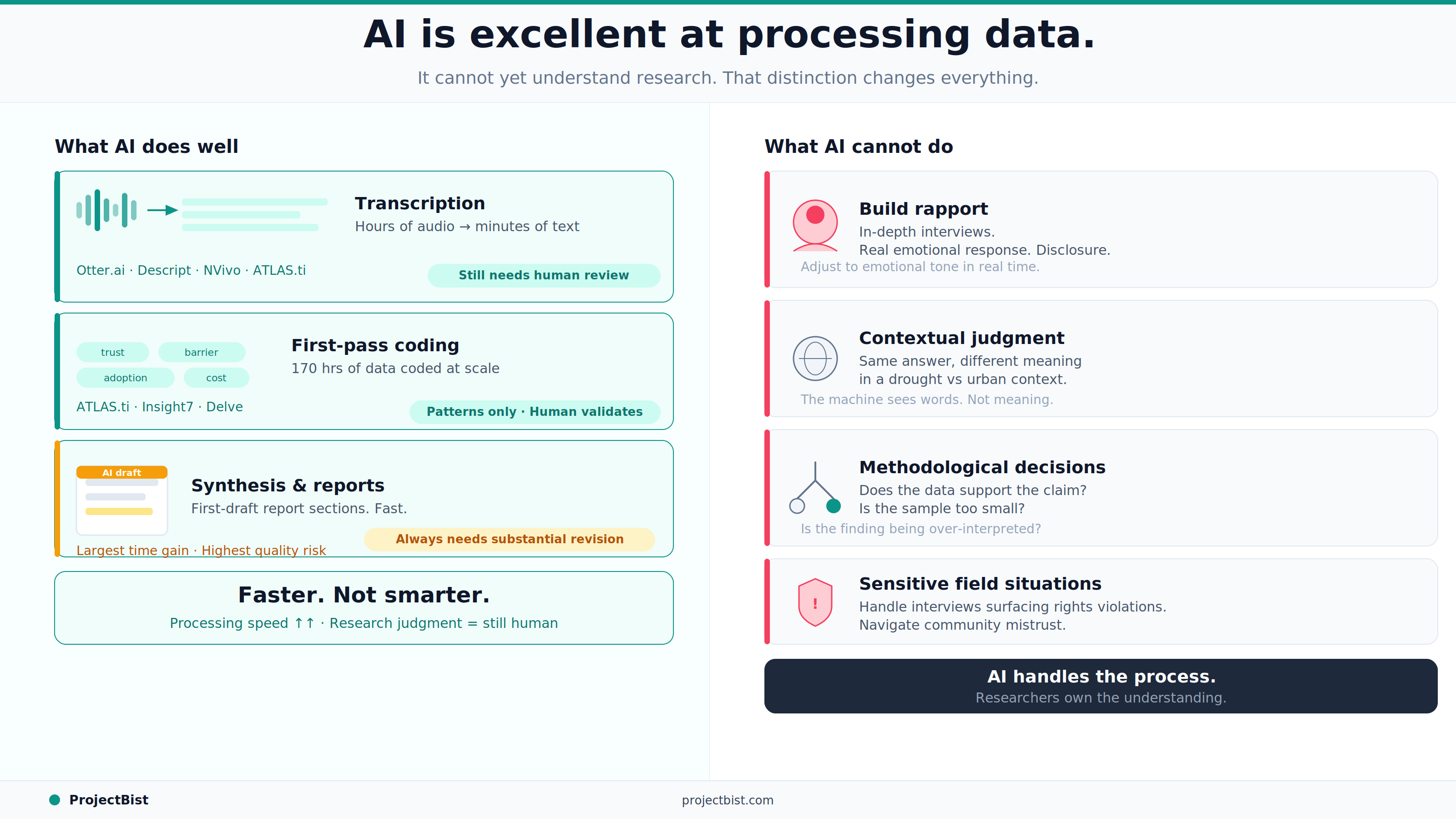

What AI Is Genuinely Doing Well in Research Workflows

1. Transcription

Automatic transcription has been one of the most practically significant AI contributions to qualitative research. Tools like Otter.ai, [Descript](https://www.descript.com), and the transcription modules built into NVivo and ATLAS.ti can produce first-draft transcripts from audio in minutes rather than hours. Human transcribers typically need two to four hours per hour of audio, so this is an enormous time saving.
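To make the scale of that saving concrete, here is a minimal sketch of the arithmetic. The two-to-four-hour range comes from the figure above; the function name and the 10-hour example are illustrative assumptions, not data from any particular project.

```python
def manual_transcription_hours(audio_hours, low_rate=2.0, high_rate=4.0):
    """Estimate the range of human transcription time for a batch of audio.

    low_rate and high_rate are hours of work per hour of audio
    (the two-to-four-hour range quoted above).
    """
    if audio_hours < 0:
        raise ValueError("audio_hours must be non-negative")
    return audio_hours * low_rate, audio_hours * high_rate


# Hypothetical example: a small study with 10 hours of recorded interviews.
low, high = manual_transcription_hours(10)
print(f"10 hours of audio: about {low:.0f} to {high:.0f} hours of manual transcription")
```

Under these assumptions, even a small study ties up 20 to 40 hours of transcription time before analysis starts. A first-draft AI transcript only changes that number by however much correction the draft still needs.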

The limitation is accuracy, particularly in multilingual settings, for respondents with strong accents or dialect variation, or in environments with background noise. In these contexts AI transcription is faster but less accurate, and the output requires significant human correction. In development and social research contexts across Africa and Asia, human transcription or hybrid approaches remain necessary for most fieldwork.

2. First-Pass Coding

AI tools can now perform first-pass coding of qualitative transcripts at scale. ATLAS.ti's AI features and purpose-built platforms such as Delve and Insight7, along with newer entrants, can apply inductive codes across large datasets in minutes. A researcher at Stanford and Harvard published findings in early 2025 showing that AI-assisted coding of 170 hours of interview data from 12 clinical sites was feasible on a project timeline that would have ruled out fully manual coding.

The important qualification, documented consistently across research, is that AI first-pass coding requires careful human review. The machine identifies patterns in language. The researcher determines whether those patterns are analytically meaningful, whether they reflect genuine themes or surface-level similarity, and whether the coding aligns with the actual research question.
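One common way to structure that review is to have a researcher independently code a sample of segments and compare the result with the AI's codes. The sketch below is an illustration under stated assumptions, not a method described in the studies mentioned above: the function names and the sample codes are hypothetical, and it assumes each segment carries exactly one code. It computes raw agreement and Cohen's kappa, which corrects for agreement expected by chance.

```python
from collections import Counter


def percent_agreement(ai_codes, human_codes):
    """Share of segments where the AI and the human assigned the same code."""
    if len(ai_codes) != len(human_codes):
        raise ValueError("both lists must cover the same segments")
    if not ai_codes:
        raise ValueError("at least one coded segment is required")
    matches = sum(a == h for a, h in zip(ai_codes, human_codes))
    return matches / len(ai_codes)


def cohens_kappa(ai_codes, human_codes):
    """Agreement between two coders, corrected for chance agreement."""
    n = len(ai_codes)
    observed = percent_agreement(ai_codes, human_codes)
    ai_counts = Counter(ai_codes)
    human_counts = Counter(human_codes)
    # Chance agreement: probability both coders pick the same code at random,
    # given how often each coder used each code.
    expected = sum(ai_counts[c] * human_counts[c] for c in ai_counts) / (n * n)
    if expected == 1.0:
        # Both coders used one identical code throughout; kappa is undefined,
        # so report perfect agreement by convention in this sketch.
        return 1.0
    return (observed - expected) / (1 - expected)


# Hypothetical review sample: six segments, one code per segment.
ai = ["cost", "cost", "trust", "access", "trust", "cost"]
human = ["cost", "trust", "trust", "access", "trust", "cost"]

print(f"Raw agreement: {percent_agreement(ai, human):.2f}")
print(f"Cohen's kappa: {cohens_kappa(ai, human):.2f}")
```

A number like this only says how often the machine and the researcher agree. Whether the codes are analytically meaningful for the research question remains the researcher's call.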

3. Synthesis and Summarization

AI tools are increasingly used to generate thematic summaries from coded qualitative data, produce first-draft report sections, and synthesize findings from multiple sources. This is where the productivity gain is largest and where the quality risk is also highest.

AI-generated summaries reflect patterns in the text. They do not reflect the researcher's understanding of the context, the client's decision-making situation, or the analytical framework that makes certain patterns more significant than others. Every AI-generated synthesis used in a professional research product requires substantial human revision before it becomes a research finding.

AI is excellent at processing data. It is not yet capable of understanding research. The distinction matters enormously for what you can delegate and what you cannot.

What AI Still Cannot Do

- Build rapport with a respondent. Conduct an in-depth interview in a way that creates the conditions for honest, deep disclosure. Adjust to the emotional tone of a conversation in real time.

- Apply contextual judgment. Understand that a respondent's answer about food security means something different in a drought-affected region than in an urban middle-class neighborhood.

- Make methodological decisions. Determine whether the data supports the conclusion the client wants to draw. Identify when findings are being over-interpreted. Know when the sample is too small to say what the report is claiming.

- Navigate sensitive field situations. Handle an interview that surfaces evidence of human rights violations, or determine what to do when a respondent's safety may be at risk.

What This Means for Research Professionals

The tasks that AI is taking over are largely the mechanical ones: transcription, initial sorting, first-pass coding, drafting. These are tasks that consumed significant time but were not where the core research value was generated.

The tasks that AI is making more visible as distinctly human are the interpretive ones: understanding context, making analytical judgments, translating findings into implications, building client relationships. These are the tasks where senior research professionals have always created the most value.

The shift is toward fewer hours on mechanical processing and more hours on high-judgment work. For experienced researchers, that is a good deal. For researchers who were coasting on process management rather than genuine insight generation, it is a challenge.

For research firms positioning themselves in this environment, verified expertise in specific sectors and methodologies, documented through platforms like ProjectBist, is becoming more valuable, not less. Clients choosing a researcher increasingly need confidence in the human judgment behind the work, not just in the tools used to execute it.

Newsletter

Get ProjectBist research notes in your inbox

Personalize your updates! Subscribe to ProjectBist's Newsletter and choose from the following categories.

Related Articles

View all

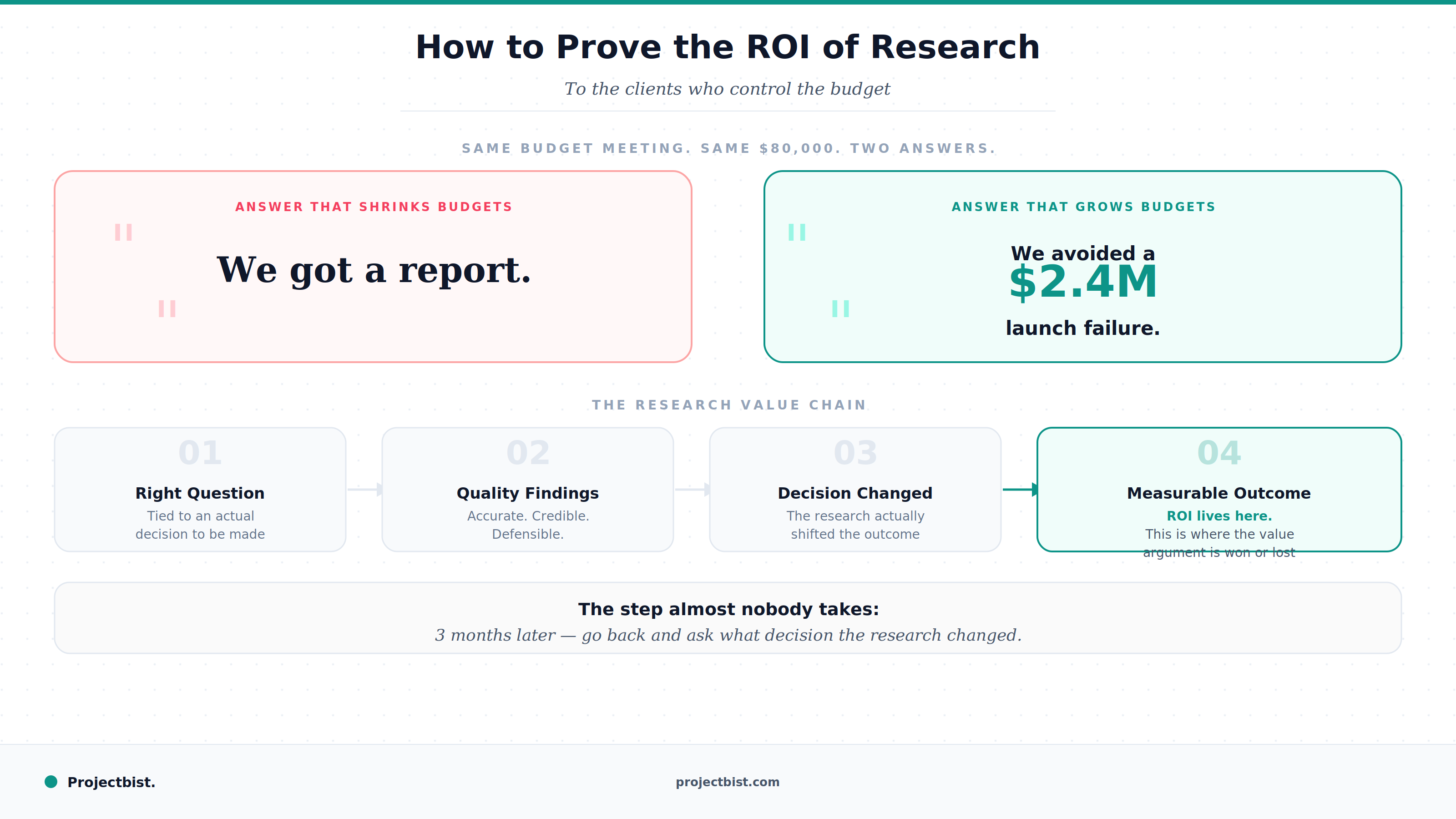

How to Prove the ROI of Research to Clients Who Control the Budget (And Why This Skill Has Never Mattered More)

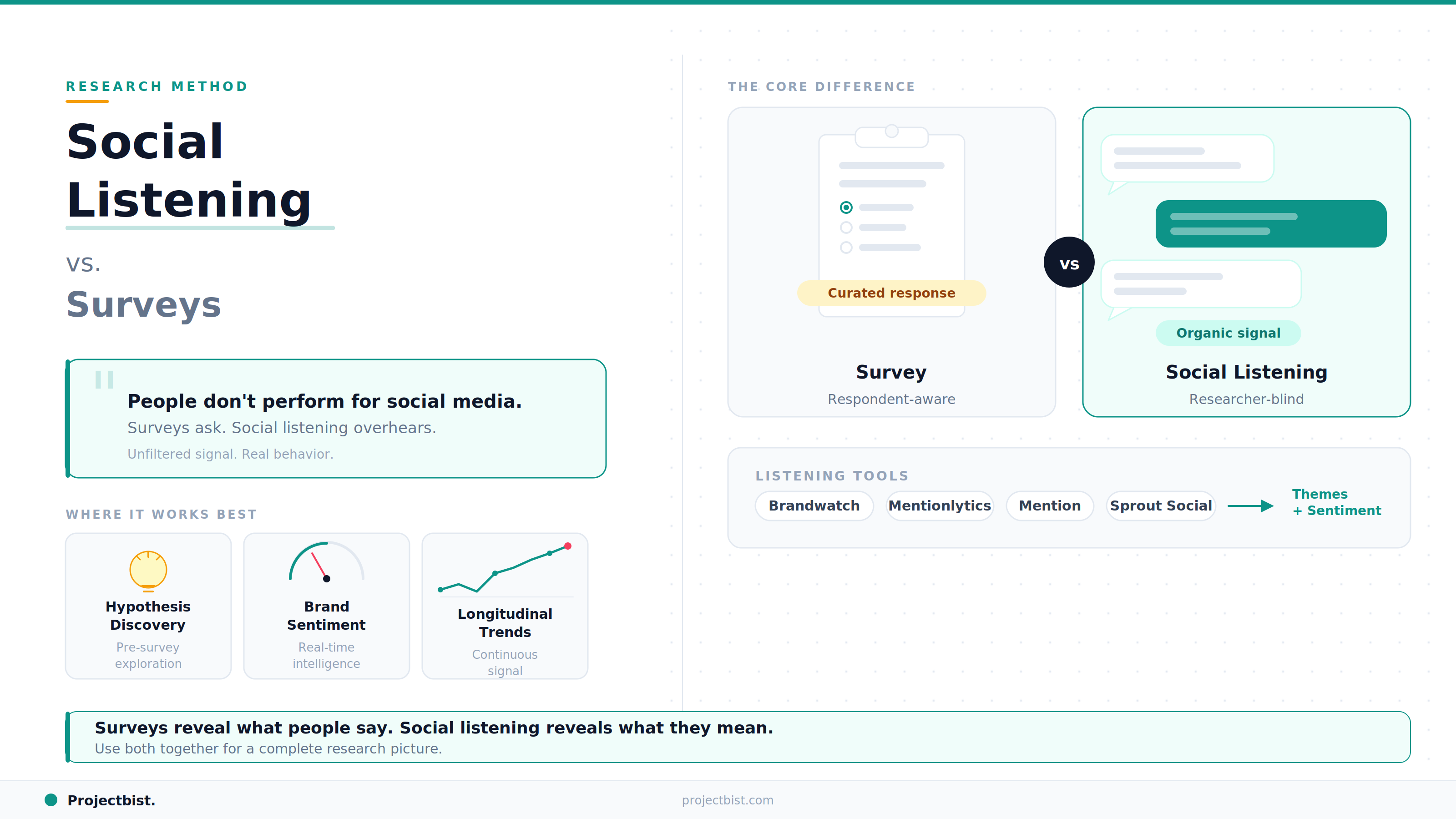

Social Listening as a Research Method: What It Can Tell You That Surveys Cannot

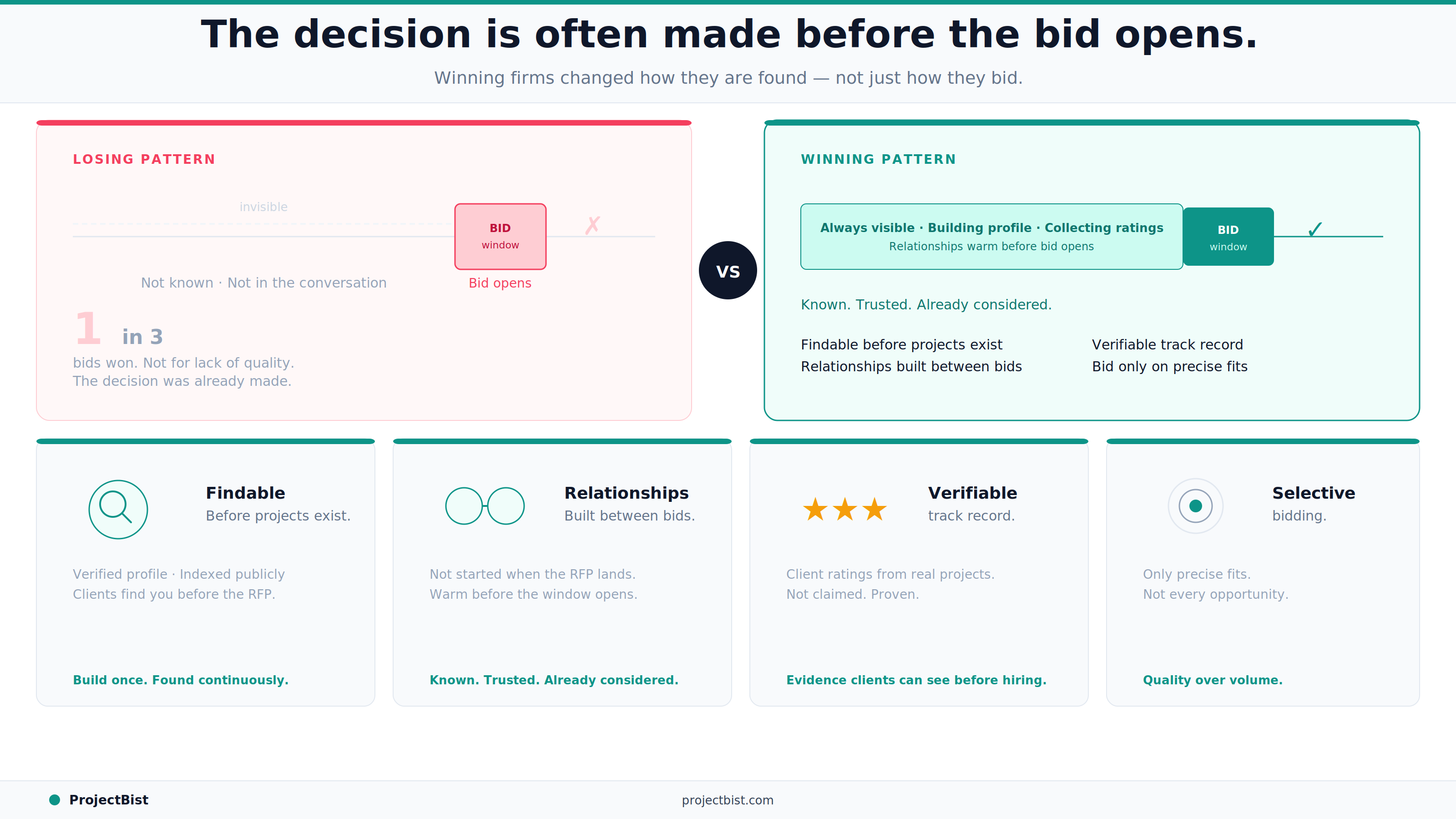

Why Research Firms Struggle to Win Bids (And What the Ones Winning Consistently Do Differently)